By David Barnett, Subject Matter Expert – Brand Monitoring

Phishing scams are nothing new in the online security world and show no signs of subsiding. The scam starts when a fraudster sends a communication purporting to originate from a trusted provider and encourages the recipient, often with a conveyed sense of urgency, to click a link. That link leads to a fake site, usually intended to collect confidential login credentials or other personal information. In similar scams, the mail may encourage the recipient to open an attachment loaded with malicious content.

The communications are often sent to a large list of recipients whose contact details have usually been “harvested” from the internet (databases with this kind of information are tradable commodities in their own right). The fraudsters hope that some of the recipients will be genuine customers of the organization being impersonated. In other cases, personal details may be obtained via a breach of a company’s customer database, allowing the fraudster to construct a much more tailored attack.

The COVID pandemic has provided many additional opportunities to fraudsters through the increased use of online channels by individuals working from home during lockdown. COVID-related lures encourage users seeking information, reassurance, and assistance to click on links. The Anti-Phishing Working Group (APWG) reported that almost 147,000 phishing sites were detected in the second quarter of 2020 alone, with more than 350 distinct brands targeted each month. These sites spanned a range of industry verticals, topped by Software-as-a-Service and financial services[1]. Similar trends were reported for Q4[2].

As long as phishing attacks generate revenue for criminals, the practice will persist. It continues to evolve through new “hooks,” new communication channels, and increasingly clever ways of creating convincing fake content. This highlights the need for brands to defend their customer base by using monitoring and takedown technology. Nearly 60% of organizations worldwide reported that they had experienced a successful phishing attack during 2020[3].

A recent phishing example—and identification tips for consumers

Figure 1 shows an example of a phishing communication sent by SMS. Text messaging remains a popular channel for distributing phishing content, with many brands (including numerous examples outside the most-attacked industries) reported as having been targeted in this way during 2021. The U.K.’s Royal Mail, for example, saw an overall 645% increase in phishing activity for March 2021, compared with the previous month[4],[5].

Figure 1: Example of an SMS-based phishing attack targeting HSBC customers, received on April 25, 2021

The example shown in Figure 1, targeting HSBC customers, incorporates several elements that make it a relatively convincing phishing scam. The link bears a superficial similarity to an official link for HSBC U.K. (whose official domain name is hsbc.co.uk). The link also incorporates the HTTPS prefix, and the communication lacks much of the poor spelling and grammar often present in phishing scams.

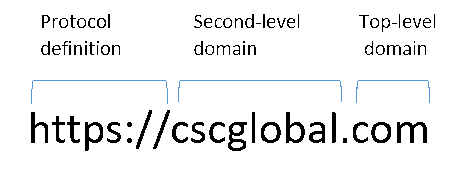

There are, however, many tell-tale elements consumers should look out for. The key point is that the URL is not actually hosted on an official HSBC site. The place to look is in the domain name part of the URL, consisting of a top-level domain (TLD)—or extension—such as .com or .co.uk, and (separated by a dot) the second-level domain name which immediately precedes it. In a URL, this occurs immediately before the first slash (/) after the initial protocol definition (in this case, https://), or in cases where there is no slash, at the very end of the URL.

In the HSBC scam here, the domain name is actually “uk-account.help,” a domain name wholly independent of anything owned by HSBC and under the control of a third-party fraudster. The owner of this domain can create whatever subdomain string (the part before the domain name) they wish, and so has constructed the fake site at “hsbc.co.uk-account.help,” which superficially appears very similar to “hsbc.co.uk/account/help,” a URL which would be part of the official hsbc.co.uk site.
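
The check described above can be sketched in a few lines of Python. This is a minimal illustration, not a production parser: the function names and the small `MULTI_LABEL_SUFFIXES` set are assumptions made for this example, and a real implementation would consult the full Public Suffix List rather than a hard-coded subset of extensions.

```python
from urllib.parse import urlsplit

# Illustrative subset only (an assumption for this sketch); a real check
# should use the complete Public Suffix List.
MULTI_LABEL_SUFFIXES = {"co.uk", "org.uk", "ac.uk", "com.au", "co.jp"}


def registrable_domain(url: str) -> str:
    """Return the domain name part of a URL: second-level name plus extension."""
    # urlsplit only recognizes the host when the URL includes its protocol,
    # e.g. "https://"; the host is everything before the first slash after it.
    host = (urlsplit(url).hostname or "").rstrip(".")
    labels = host.split(".")
    if len(labels) < 2:
        return host
    # Extensions such as .co.uk span two labels, so keep three labels in total.
    if len(labels) >= 3 and ".".join(labels[-2:]) in MULTI_LABEL_SUFFIXES:
        return ".".join(labels[-3:])
    return ".".join(labels[-2:])


def is_official(url: str, official_domain: str = "hsbc.co.uk") -> bool:
    """True when the URL's domain name matches the brand's official domain."""
    return registrable_domain(url) == official_domain


print(registrable_domain("https://hsbc.co.uk-account.help"))  # uk-account.help
print(registrable_domain("https://hsbc.co.uk/account/help"))  # hsbc.co.uk
```

Reading the host from the right-hand end is the important design choice here: everything to the left of the domain name is a subdomain string that the domain owner controls.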

In other cases, scammers may register domains that use the actual brand name of the targeted organization but weren’t included in the brand owner’s domain portfolio (e.g., using a version with additional keywords or a different domain extension). The phony domain could feature a brand variant or misspelling as a way of creating a convincing URL.
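
As a rough illustration of how such variants might be spotted, the sketch below classifies the second-level part of a domain name against a brand string. The category names, the one-character edit-distance threshold, and the sample labels are all hypothetical choices for this example; it is not a description of any particular monitoring product.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance between two strings, computed row by row."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def classify_label(label: str, brand: str, max_distance: int = 1) -> str:
    """Classify a second-level domain label relative to a brand name."""
    label, brand = label.lower(), brand.lower()
    if label == brand:
        return "exact"                # brand name on some extension
    if brand in label:
        return "brand-plus-keyword"   # brand with additional keywords
    # Check each hyphen-separated token for a near-miss spelling.
    if any(0 < edit_distance(tok, brand) <= max_distance
           for tok in label.split("-")):
        return "misspelling"
    return "unrelated"


# Hypothetical labels, for illustration only.
for label in ["hsbc", "hsbc-secure-login", "hsbk", "uk-account"]:
    print(label, "->", classify_label(label, "hsbc"))
```

Note that "uk-account" is classified as unrelated: when the brand appears only in the subdomain string, as in the HSBC example above, matching on the domain name alone would not catch it.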

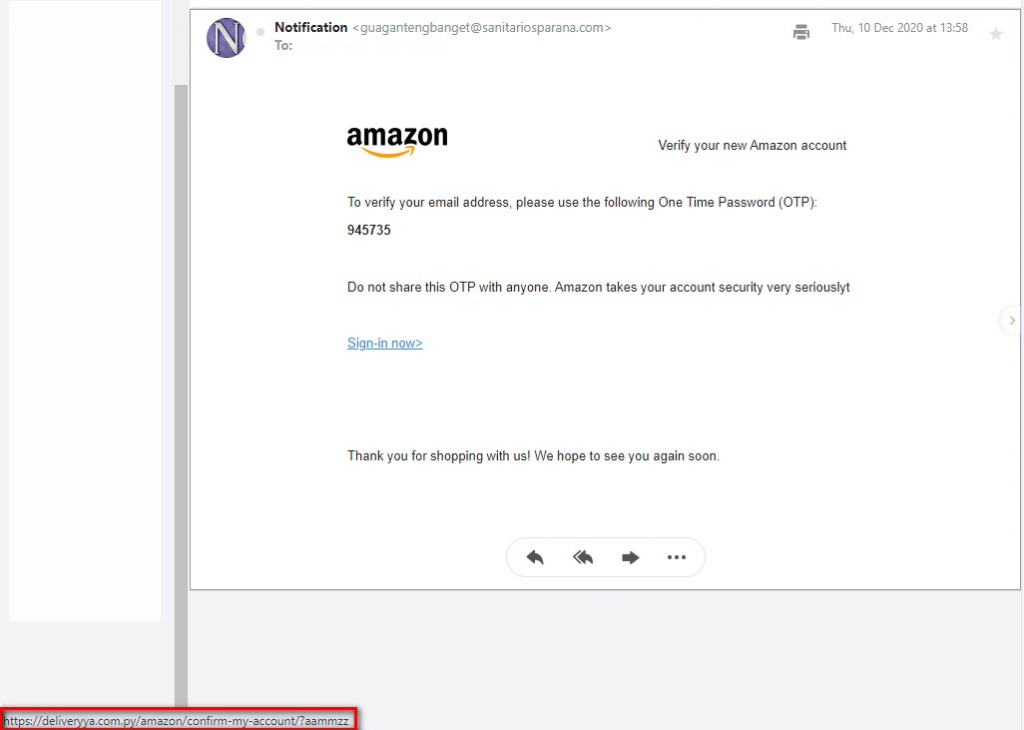

Phishing communications that use “richer” formatting (e.g., HTML emails) can construct a message where the visible link may be a URL from the brand owner’s official site, but the destination URL accessed by clicking the link is a distinct and fraudulent address. In these cases, users should hover over the link with a mouse, which usually shows the actual destination URL.

Figure 2: Example of an HTML email showing the actual destination URL (highlighted in red) revealed by hovering over the link
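
The mismatch between a visible link and its real destination can also be checked programmatically. The following is a minimal sketch using only the Python standard library; the class and function names are made up for this example, and the heuristic for deciding whether link text "looks like" a web address is a deliberately simple assumption.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class LinkCollector(HTMLParser):
    """Collect (href, visible text) pairs for every <a> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def host_of(text: str) -> str:
    """Host name of a URL-like string, tolerating a missing protocol."""
    if "://" not in text:
        text = "https://" + text
    return (urlsplit(text).hostname or "").lower()


def mismatched_links(html: str):
    """Return (visible text, real destination) pairs whose hosts differ."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    flagged = []
    for href, text in parser.links:
        # Only compare when the visible text itself looks like a web address.
        looks_like_url = "." in text and " " not in text
        if looks_like_url and host_of(text) != host_of(href):
            flagged.append((text, href))
    return flagged
```

Given a message whose link text reads `https://www.hsbc.co.uk/` but whose `href` points to `https://hsbc.co.uk-account.help/`, `mismatched_links` would return that pair, while a link labeled "contact us" is ignored because its text is not a web address.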

In addition to the non-legitimate domain name, other factors in the HSBC SMS scam should also raise red flags: the message originates from a standalone mobile number, and the text includes all of the textbook elements of a phishing scam (e.g., it is unpersonalized, which a communication from a legitimate service provider to a customer would almost never be).

This particular scam also incorporates a couple of additional interesting elements. First, the fraudulent domain is hosted on the .help extension, one of the newer gTLDs launched in 2014[6]. This may be less immediately recognizable as an extension—and therefore part of the domain name itself—to users more familiar with the traditional extensions such as .com and .co.uk.

Second, the embedded link uses the secure HTTPS protocol, frequently tipped as a point to check when verifying a link’s authenticity. Although HTTPS generally indicates that the web traffic is encrypted during transit, it doesn’t guarantee that the destination site is genuine. There are many budget providers of digital certificates (which enable a site to use the HTTPS protocol) that will sell them without any checks on the legitimacy of the site in question. In fact, in Q2 2020, 78% of phishing sites were found to be employing digital certificates, up from 5% at the end of 2016[1].

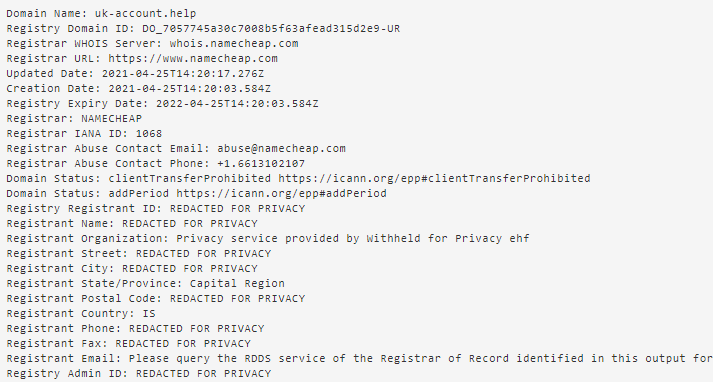

The final notable element of this scam is that the domain in question (uk-account.help), despite only being registered on April 25 (see Figure 3), had actually been deregistered by the following day (and accordingly no longer resolved to any live site). Although the brand owner (or an associated brand-protection service provider) may have quickly taken the site down, the use of a domain and related phishing site that is so short-lived is typical of fraudsters hoping to generate quick revenue before the brand owner can discover the scam.

Figure 3: Extract of the WHOIS (ownership) record for the fraudulent domain, showing that it was registered on April 25 (the same day on which the SMS was received), and using a privacy-protection service provider
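
Because a very recent registration date is such a common trait, it can serve as a simple warning signal. The sketch below assumes the registration date has already been read from the WHOIS record; the function names and the 30-day threshold are arbitrary choices for illustration, not an established standard.

```python
from datetime import date


def domain_age_days(registered_on: date, seen_on: date) -> int:
    """Days between a domain's registration and the message being received."""
    return (seen_on - registered_on).days


def is_newly_registered(registered_on: date, seen_on: date,
                        threshold_days: int = 30) -> bool:
    """Flag domains registered shortly before the message arrived.

    threshold_days is a hypothetical cut-off chosen for this example.
    """
    age = domain_age_days(registered_on, seen_on)
    return 0 <= age <= threshold_days


# Dates from the example above: registered and received on April 25, 2021.
print(is_newly_registered(date(2021, 4, 25), date(2021, 4, 25)))  # True
```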

Actions for brand owners: Detection and enforcement—and how CSC can help

CSC works with a number of brands to guard against this type of scam using advanced detection capabilities to provide early warning of active campaigns, and a range of enforcement options to ensure rapid takedown. This provides protection for customers and mitigates reputational damage and financial losses for the brand owner.

Fraudulent content can be identified in a number of ways. With a “classic” brand detection service, fake sites are found through internet metasearching or domain-name monitoring using zone-file analysis. For those organizations where phishing is a particular issue, a dedicated anti-fraud service can make use of a range of additional phishing detection techniques to maximize the likelihood of early detection. This may involve the use of:

- Spam traps and honeypots to intercept a cross section of spam emails with a view to identifying phishing emails

- Analysis of customers’ webserver logs to identify instances when fake sites draw content from, or redirect to, official sites

- Accepting feeds from dedicated mailboxes where customers can report scams
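
To make the webserver-log technique more concrete, here is a minimal sketch that counts requests whose Referer header points to a host outside the brand's own domains. It assumes logs in the common "combined" format used by Apache and nginx; the function names are hypothetical, and the approach shown is a generic illustration rather than a description of CSC's implementation.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Combined log format:
# host ident user [time] "request" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "[^"]*" \d{3} \S+ "(?P<referer>[^"]*)" "[^"]*"$'
)


def is_official_host(host: str, official_domains) -> bool:
    """True if host is an official domain or one of its subdomains."""
    return any(host == d or host.endswith("." + d) for d in official_domains)


def suspicious_referers(log_lines, official_domains) -> Counter:
    """Count requests referred from hosts outside the official domains."""
    counts = Counter()
    for line in log_lines:
        match = LOG_PATTERN.match(line.strip())
        if not match:
            continue  # skip lines that are not in the expected format
        host = (urlsplit(match.group("referer")).hostname or "").lower()
        if host and not is_official_host(host, official_domains):
            counts[host] += 1
    return counts
```

External referers also include search engines and other legitimate sites, so the resulting counts would be a list of candidates for review rather than confirmed phishing sites.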

All of this content feeds into CSC’s phishing correlation engine, which automatically analyzes URL patterns and site content using machine learning to match candidate sites against previously established predictors of fraudulent content.

Having identified that a scam is active, the next step is rapid takedown. CSC can work with registrars and hosting providers—many of whom will accept proof of fraudulent activity as a contravention of their terms and conditions, and thus grounds for takedown—in addition to other industry bodies to ensure that a fake site is suspended. In other cases, it may be appropriate to work with providers to deactivate the source of the communications (i.e., the sender’s email address or originating telephone number), or to have the site blacklisted in online databases.

We’re ready to talk

If you’d like to find out more about CSC’s anti-fraud services, please complete a contact form, and one of our expert team members will call you to discuss.

[1] docs.apwg.org/reports/apwg_trends_report_q2_2020.pdf

[2] statista.com/statistics/266161/websites-most-affected-by-phishing/

[3] statista.com/statistics/1149241/share-organizations-worldwide-phishing-attack/

[4] itpro.co.uk/security/359176/645-increase-in-royal-mail-related-phishing-scams

[5] lancs.live/incoming/dangerous-text-scams-bank-smishing-20400193